What should a medical educator know about the new AI model, o1?

OpenAI announced a PhD-student-level AI model, named "o1", on 12 September 2024. Here is a brief explanation of what makes it special.

Dear Medical Educator,

Those who follow MedEdFlamingo heard in March 2024 that a new model with higher reasoning capabilities was coming.

Although we don’t know for certain whether the new model includes Q*, it has significantly higher reasoning capabilities than OpenAI’s previous frontier model, GPT-4o.

What made the new model more successful in reasoning?

Those who read “10 beginner level practical tips for using AI” may remember this prompting technique: if you add “think step-by-step” to your prompt, the model gives better outputs.

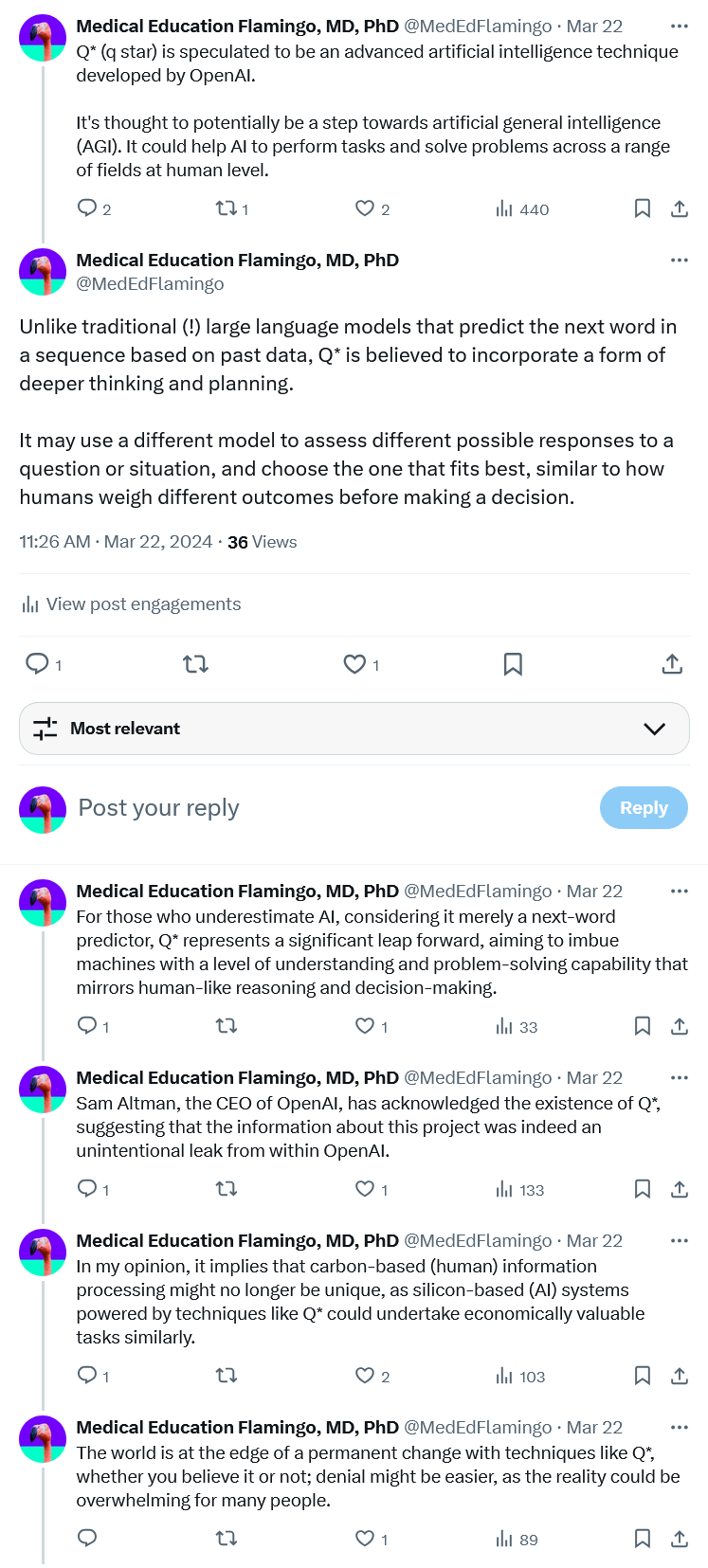
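For readers who send prompts through a script rather than the chat window, the tip can be sketched in a few lines of Python. This is only an illustration: the function name `with_step_by_step` and the example prompt are hypothetical, and no AI service is called here. The code only builds the text you would send.

```python
def with_step_by_step(prompt: str, cue: str = "Think step-by-step.") -> str:
    """Append a step-by-step cue to a prompt unless one is already present."""
    text = prompt.strip()
    lowered = text.lower()
    # Avoid repeating the cue if the prompt already asks for stepwise reasoning.
    if "step-by-step" in lowered or "step by step" in lowered:
        return text
    return f"{text}\n\n{cue}"


# Hypothetical example prompt from a medical education setting.
example = with_step_by_step(
    "A 58-year-old patient presents with chest pain. "
    "Write one multiple-choice question on the most likely diagnosis."
)
print(example)
```

The returned text can be pasted into any chatbot. With a model that already reasons step-by-step on its own, the extra cue should not be needed.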

Given this, OpenAI trained the model to think step-by-step natively. This approach is similar to how humans think before answering a question rather than responding immediately. The model uses chains of thought, providing you with a summary of its internal “thoughts” before generating a response to your prompt. Below is an example of this thought process:

However, I don’t recommend switching to o1 immediately for every task. Here is why:

It is not available to free users. Even as a paid user of ChatGPT, you have a weekly usage limit of 30 prompts [update, 17 Sep 2024: increased to 50 prompts], regardless of the complexity or length of each prompt. It's advisable to reserve this usage for high-level reasoning tasks.

Although its price is expected to decrease over time, it is currently as much as 100 times more expensive than some previous models. If your task justifies the cost, go ahead and use it. If not, consider other versions or alternative models. ChatGPT is not the only AI option available, and there are other good alternatives.

It is significantly slower than the previous versions. If o1 handles your task in just a few seconds, the task was probably simple enough for a previous version.

Which tasks are better suited to o1?

Creative Tasks: Writing mnemonic poems to help students remember complex medical terms or processes.

Philosophical Reasoning: Analyzing ethical dilemmas in patient care to facilitate classroom discussions on medical ethics.

Deciphering Complex Ciphers: Interpreting and diagnosing unusual case studies or rare diseases by identifying subtle symptoms.

Automating Processes: Developing a system that assigns medical educators to student assignments based on the educators' expertise and the assignment topics. Here is a similar good example. An o1 version of this could handle a more complex version of the assignment task.
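To make the assignment task concrete, here is a minimal sketch in Python of its simplest version. It is not the system from the example mentioned above. It assumes a plain greedy rule (give each student assignment to the least-loaded educator whose expertise covers the topic), and every name in it (`assign_educators`, `max_load`, the educators, the topics) is hypothetical.

```python
from collections import defaultdict


def assign_educators(expertise, assignments, max_load=2):
    """Greedy matching of student assignments to educators.

    expertise:   dict mapping educator name -> set of topics they cover
    assignments: list of (assignment_id, topic) tuples
    max_load:    assumed cap on assignments per educator
    Returns (dict assignment_id -> educator, list of unassigned ids).
    """
    load = defaultdict(int)  # how many assignments each educator already has
    matched = {}
    unassigned = []
    for assignment_id, topic in assignments:
        candidates = [
            name
            for name, topics in expertise.items()
            if topic in topics and load[name] < max_load
        ]
        if not candidates:
            unassigned.append(assignment_id)
            continue
        # Pick the least-loaded candidate; ties are broken alphabetically.
        chosen = min(candidates, key=lambda name: (load[name], name))
        matched[assignment_id] = chosen
        load[chosen] += 1
    return matched, unassigned


# Hypothetical data for illustration only.
expertise = {
    "Dr. A": {"cardiology", "ethics"},
    "Dr. B": {"cardiology"},
    "Dr. C": {"neurology"},
}
assignments = [
    ("essay-1", "cardiology"),
    ("essay-2", "cardiology"),
    ("essay-3", "ethics"),
    ("essay-4", "dermatology"),
]
matched, unassigned = assign_educators(expertise, assignments)
print(matched)     # {'essay-1': 'Dr. A', 'essay-2': 'Dr. B', 'essay-3': 'Dr. A'}
print(unassigned)  # ['essay-4']
```

A greedy rule like this is easy to check by hand, but it ignores things such as educator preferences, deadlines, or conflicts of interest. Those added constraints are the kind of complexity where a stronger reasoning model may help you design the logic.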

If you find this helpful, consider subscribing to my newsletter for more tips directly in your inbox. The newsletter is dedicated to enhancing your teaching and assessment practices with the latest AI tools and more.

If you have already subscribed, help your colleagues discover it by sharing this article.

Yavuz Selim Kıyak, MD, PhD (aka MedEdFlamingo)

Follow the flamingo on X (Twitter) at @MedEdFlamingo for daily content.

Subscribe to the flamingo’s YouTube channel.

LinkedIn is another option to follow.

Who is the flamingo?

Related reading:

Kıyak, Y. S., & Kononowicz, A. A. (2024). Case-based MCQ generator: A custom ChatGPT based on published prompts in the literature for automatic item generation. Medical Teacher, 1-3. https://www.tandfonline.com/doi/full/10.1080/0142159X.2024.2314723

Kıyak, Y. S., & Emekli, E. (2024). ChatGPT prompts for generating multiple-choice questions in medical education and evidence on their validity: a literature review. Postgraduate Medical Journal, qgae065. https://academic.oup.com/pmj/advance-article/doi/10.1093/postmj/qgae065/7688383

Kıyak, Y. S., & Emekli, E. (2024). A Prompt for Generating Script Concordance Test Using ChatGPT, Claude, and Llama Large Language Model Chatbots. Revista Española de Educación Médica, 5(3). https://revistas.um.es/edumed/article/view/612381